How to Design High-IOPS Servers for Database-Heavy Workloads

When transaction latency begins fluctuating under concurrency, when checkpoints suddenly stretch longer than expected, or when replication starts lagging during peak hours, the limitation is rarely abstract. It is architectural. In production environments running mission-critical databases, storage behavior determines stability long before CPU utilization becomes the bottleneck. Designing high-IOPS servers is not a hardware upgrade decision. It is a structural engineering exercise.

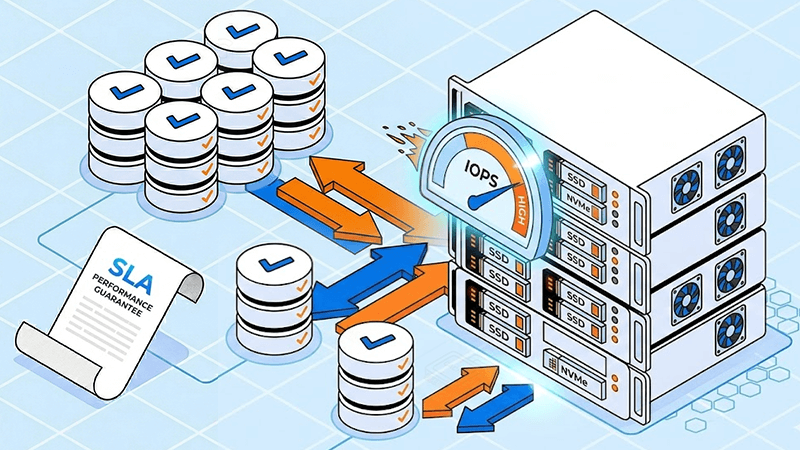

For organizations operating PostgreSQL, MySQL, Microsoft SQL Server, MongoDB, Redis, or analytics engines such as ClickHouse, the underlying disk subsystem directly influences user experience, SLA adherence, and long-term operational cost. A high-performance database server must be built around predictable I/O performance, not theoretical maximum throughput.

Database Workloads Stress Storage Differently

Database engines are I/O pattern generators. Every transaction can trigger write-ahead log (WAL) commits, data page updates, index modifications, checkpoint flushes, and background maintenance processes. Media streaming and backup systems move large sequential blocks. OLTP and other high-concurrency systems instead generate sustained small-block random reads and writes.

IOPS (input/output operations per second) measures how many discrete read or write operations a storage system can complete per second. Throughput measures the total amount of data transferred per second. For database server optimization, especially in transaction-heavy environments, IOPS and latency consistency matter more than peak megabytes per second.
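Every operation moves one I/O-sized chunk of data, so throughput is simply IOPS multiplied by the average I/O size. The small Python sketch below converts in both directions using decimal megabytes. The helper names are illustrative. Note that the figure of about 160MB per second used in this article corresponds to reading 8KB as 8,000 bytes. With 8 KiB (8,192-byte) database pages, the same 20,000 IOPS move about 164 MB per second.

```python
def throughput_mb_per_s(iops: float, io_size_bytes: int) -> float:
    """Throughput in decimal megabytes per second (1 MB = 1,000,000 bytes)."""
    return iops * io_size_bytes / 1_000_000


def iops_for_throughput(mb_per_s: float, io_size_bytes: int) -> float:
    """Operations per second needed to sustain mb_per_s at a fixed I/O size."""
    return mb_per_s * 1_000_000 / io_size_bytes


if __name__ == "__main__":
    # Small random I/O: 20,000 operations of 8 KiB each
    print(f"{throughput_mb_per_s(20_000, 8_192):.1f} MB/s")
    # The same bandwidth delivered as 1 MiB sequential chunks needs far fewer operations
    print(f"{iops_for_throughput(163.84, 1_048_576):.0f} IOPS")
```

The contrast is the point. A sequential workload reaches the same bandwidth with roughly 156 operations per second, while the random small-block pattern needs 20,000.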

A system delivering 20,000 random 8KB operations per second may move only about 160MB per second in total throughput. Even so, it demands very high IOPS capability to prevent queue buildup. Once disk queues grow, I/O wait rises, CPUs sit idle waiting on storage, and application response time degrades. This is where a server for database-heavy workloads either sustains performance or begins to destabilize.
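Latency and queue buildup are linked by Little's law: the average number of I/Os in flight equals the completion rate multiplied by the average time each one spends in the system. The sketch below illustrates two consequences. The function names are hypothetical.

- At a fixed 20,000 IOPS, any latency increase directly deepens the queue.
- A single synchronous writer at queue depth 1 is capped at 1 / latency operations per second.

```python
def outstanding_ios(iops: float, avg_latency_ms: float) -> float:
    """Little's law: average in-flight I/Os = completion rate x time in system."""
    return iops * (avg_latency_ms / 1000.0)


def iops_ceiling(queue_depth: float, avg_latency_ms: float) -> float:
    """Most IOPS a fixed number of in-flight requests can sustain at this latency."""
    return queue_depth / (avg_latency_ms / 1000.0)


if __name__ == "__main__":
    for latency_ms in (0.1, 1.0, 5.0):
        print(f"20,000 IOPS at {latency_ms} ms -> "
              f"{outstanding_ios(20_000, latency_ms):.0f} requests in flight")
    # One synchronous writer (queue depth 1) waiting 0.1 ms per operation
    print(f"{iops_ceiling(1, 0.1):,.0f} IOPS ceiling at queue depth 1")
```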

Why NVMe Is Foundational for High-IOPS Architectures

Mechanical drives are physically limited by seek time and rotational latency. Traditional HDDs rarely exceed 150 IOPS per disk under random load. SATA SSDs improved on this dramatically, but NVMe redefined the performance boundary.
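The HDD ceiling falls out of mechanics. Each random I/O pays an average seek, plus on average half a platter rotation. The sketch below estimates per-disk random IOPS from those two terms. The seek times are illustrative assumptions, not figures for any specific drive, and transfer time is ignored because it is small for 8KB requests.

```python
def hdd_random_iops(rpm: int, avg_seek_ms: float) -> float:
    """Estimate random IOPS for one spinning disk (transfer time ignored)."""
    # On average the head waits half a revolution for the target sector.
    rotational_latency_ms = 0.5 * 60_000 / rpm
    service_time_ms = avg_seek_ms + rotational_latency_ms
    return 1000.0 / service_time_ms


if __name__ == "__main__":
    # Assumed average seek times for two common drive classes (illustrative only)
    for rpm, seek_ms in [(7_200, 8.5), (10_000, 4.5)]:
        print(f"{rpm:>6} RPM: ~{hdd_random_iops(rpm, seek_ms):.0f} IOPS")
```

Under these assumptions the estimates come out at roughly 79 and 133 IOPS, consistent with the ceiling described above.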

NVMe communicates directly over PCIe lanes and reduces protocol overhead. It also supports many parallel command queues: the specification allows up to 65,535 I/O queues, each up to 65,536 commands deep, compared with a single 32-command queue for AHCI/SATA. For databases executing thousands of concurrent operations, this parallelism is critical. Lower latency per operation translates directly into faster commit times and more stable concurrency scaling.

However, deploying NVMe alone does not guarantee resilience. A high-IOPS server configuration must account for controller behavior, endurance class, RAID topology, cooling, and workload isolation. Enterprise storage for databases prioritizes sustained consistency over synthetic benchmark numbers.

Understanding NAND Behavior in Database Environments

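Flash memory cannot overwrite data in place. Data is written in pages but erased only in much larger blocks. When a page changes, the SSD controller writes the new version to a free page and marks the old copy invalid. Later, garbage collection must copy any still-valid pages out of a block before that block can be erased and reused. These internal copies mean the NAND absorbs more writes than the host issued, an effect called write amplification.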

Database clusters, SaaS platforms, financial systems, and virtualization hosts generate highly random write patterns. Random writes scatter invalid pages across many blocks. As a result, garbage collection runs more often and relocates more valid data, pushing internal NAND activity well beyond host write volume. Without adequate over-provisioning and free-space management, latency spikes can appear during these background cleanup cycles.

Enterprise NVMe SSD servers for database deployments should keep sufficient capacity unused, typically 10 to 20 percent, to reduce block-recycling pressure. Endurance modeling must account for host write volume multiplied by the observed write amplification factor (WAF). Ignoring this leads to inaccurate lifecycle forecasts and potentially premature drive degradation.
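The effect of spare capacity can be illustrated with a toy model. The Python sketch below simulates a simplified flash translation layer:

- The drive is filled once.
- It is then overwritten with uniformly random single-page writes.
- A greedy garbage collector always reclaims the full block with the fewest valid pages.

The block geometry, the greedy policy, and the uniform write pattern are all simplifying assumptions, not how any particular controller works. The absolute numbers are illustrative only; the trend is what matters.

```python
import random


def simulate_waf(num_blocks=256, pages_per_block=64, spare_fraction=0.10,
                 host_writes=50_000, seed=1):
    """Toy FTL with greedy GC under uniform random overwrites; returns the WAF.

    spare_fraction is the share of raw NAND hidden from the host, a simplified
    stand-in for over-provisioning (assumption of this sketch).
    """
    rng = random.Random(seed)
    logical_pages = int(num_blocks * pages_per_block * (1 - spare_fraction))
    if logical_pages > (num_blocks - 2) * pages_per_block:
        raise ValueError("need more than two blocks of spare space")

    owner = [[None] * pages_per_block for _ in range(num_blocks)]  # slot -> logical page
    valid = [0] * num_blocks          # valid pages per block
    location = {}                     # logical page -> (block, slot)
    free_blocks = list(range(1, num_blocks))
    closed = set()                    # full blocks eligible for garbage collection
    open_block, next_page, nand_writes = 0, 0, 0

    def program(lpn):
        """Append one page to the open block; every call is one NAND write."""
        nonlocal open_block, next_page, nand_writes
        if next_page == pages_per_block:
            closed.add(open_block)
            open_block, next_page = free_blocks.pop(), 0
        owner[open_block][next_page] = lpn
        valid[open_block] += 1
        location[lpn] = (open_block, next_page)
        next_page += 1
        nand_writes += 1

    def invalidate(lpn):
        """Out-of-place update: mark the old copy of a logical page stale."""
        if lpn in location:
            block, slot = location.pop(lpn)
            owner[block][slot] = None
            valid[block] -= 1

    def garbage_collect():
        """Greedy GC: move valid pages out of the emptiest full block, then erase it."""
        victim = min(closed, key=lambda b: valid[b])
        closed.remove(victim)
        for lpn in list(owner[victim]):
            if lpn is not None:
                invalidate(lpn)
                program(lpn)              # relocation costs an extra NAND write
        free_blocks.append(victim)        # every slot is now empty (erased)

    for lpn in range(logical_pages):      # fill the drive once, sequentially
        program(lpn)
    nand_writes = 0                       # measure only the overwrite phase

    for _ in range(host_writes):
        while len(free_blocks) < 2:       # keep one erased block in reserve for GC
            garbage_collect()
        lpn = rng.randrange(logical_pages)
        invalidate(lpn)
        program(lpn)
    return nand_writes / host_writes
```

Try comparing simulate_waf(spare_fraction=0.10) with simulate_waf(spare_fraction=0.20). The WAF should drop as spare capacity grows, which is the mechanism behind the 10 to 20 percent guidance. Real database workloads are rarely uniform, and controllers use their own policies, so measured WAF on a given drive will differ.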

RAID Topology and Write Distribution

RAID selection influences both performance and durability. RAID 0 maximizes speed but removes redundancy. RAID 1 mirrors data for safety but does not improve write throughput. RAID 10 stripes across mirrored pairs, distributing write load while preserving resilience.

For database-heavy workloads, RAID 10 remains a preferred architecture because it balances write distribution and fault tolerance. NVMe RAID 10 spreads random writes across multiple drives, reducing localized NAND wear and maintaining consistent latency under concurrency.
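These trade-offs can be made concrete with an idealized model. The sketch below makes the following simplifications:

- Every member drive is identical.
- Each mirrored write counts twice.
- Controller overhead, caching, and rebuild behavior are ignored.

The per-drive IOPS and capacity in the usage example are hypothetical, not figures for a specific product. The writes_per_drive field expresses the wear argument: in a four-drive RAID 10, each drive absorbs half of the host write volume, while in a two-drive RAID 1 mirror each drive absorbs all of it.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RaidProfile:
    usable_tb: float
    read_iops: float         # idealized ceiling: every member can serve reads
    write_iops: float        # idealized ceiling after the mirroring penalty
    writes_per_drive: float  # share of host write volume each drive absorbs


def raid_profile(level: str, drives: int, drive_tb: float,
                 drive_read_iops: float, drive_write_iops: float) -> RaidProfile:
    """Idealized RAID 0/1/10 model (no controller, cache, or rebuild effects)."""
    if level == "0":
        copies = 1
    elif level == "1":
        if drives != 2:
            raise ValueError("this sketch models RAID 1 as a two-drive mirror")
        copies = 2
    elif level == "10":
        if drives < 4 or drives % 2:
            raise ValueError("RAID 10 needs an even number of drives, at least 4")
        copies = 2
    else:
        raise ValueError(f"unsupported RAID level {level!r}")
    return RaidProfile(
        usable_tb=drives * drive_tb / copies,
        read_iops=drives * drive_read_iops,
        write_iops=drives * drive_write_iops / copies,
        writes_per_drive=copies / drives,
    )


if __name__ == "__main__":
    # Hypothetical per-drive figures, not taken from any datasheet
    for level, n in [("0", 4), ("1", 2), ("10", 4)]:
        print(f"RAID {level}:", raid_profile(level, n, 3.84, 800_000, 200_000))
```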

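Separating transaction logs from primary data volumes further stabilizes write behavior during checkpoints and recovery operations. Log writes are sequential appends that are typically flushed on every commit. Isolating them keeps commit latency from competing with random data-file I/O. A high-performance database server architecture should isolate these write paths where possible.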

Memory, CPU, and Profiling Before Provisioning

Additional memory reduces read pressure by allowing larger buffer pools, but every write must eventually reach persistent storage. Increasing RAM cannot compensate for insufficient write IOPS capacity.

CPU scaling supports parallel query execution, background maintenance tasks, and replication threads. Multi-core configurations are typically more valuable than peak single-core frequency in high-concurrency systems.

Before provisioning infrastructure, profile the workload. Measure the following:

- Peak and average IOPS
- Read/write ratio
- Block size distribution
- Checkpoint intervals
- Replication synchronization behavior

Several tools provide actionable insight here: PostgreSQL's pg_stat_io view (version 16 and later) and its track_io_timing setting, MySQL's Performance Schema, and slow query analysis.
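Operating-system block-layer counters complement database-level views with engine-independent numbers. On Linux, /proc/diskstats exposes cumulative per-device counters. Sampling it twice and taking the difference yields several metrics:

- IOPS
- Read/write mix
- Average request size
- Average time per request

The sketch below assumes the standard field layout (device name in the third column, followed by read and write counters). The device name nvme0n1 used in the example is a placeholder. These are averages, so they do not replace a full block-size or latency distribution.

```python
import time
from typing import Dict, Tuple

SECTOR_BYTES = 512  # /proc/diskstats reports sectors in 512-byte units

# (reads, sectors_read, read_ms, writes, sectors_written, write_ms)
Counters = Tuple[int, int, int, int, int, int]


def parse_diskstats(text: str) -> Dict[str, Counters]:
    """Extract cumulative read/write counters per device from /proc/diskstats."""
    stats: Dict[str, Counters] = {}
    for line in text.splitlines():
        f = line.split()
        if len(f) < 14:  # skip malformed or truncated lines
            continue
        stats[f[2]] = (int(f[3]), int(f[5]), int(f[6]),
                       int(f[7]), int(f[9]), int(f[10]))
    return stats


def io_profile(before: Counters, after: Counters, interval_s: float) -> Dict[str, float]:
    """Turn two snapshots of one device into workload-profile metrics."""
    reads, sect_r, ms_r, writes, sect_w, ms_w = (a - b for a, b in zip(after, before))
    ops = reads + writes
    return {
        "read_iops": reads / interval_s,
        "write_iops": writes / interval_s,
        "read_pct": 100.0 * reads / ops if ops else 0.0,
        # mean request size in KiB (an average, not a distribution)
        "avg_read_kib": sect_r * SECTOR_BYTES / 1024 / reads if reads else 0.0,
        "avg_write_kib": sect_w * SECTOR_BYTES / 1024 / writes if writes else 0.0,
        # mean time per completed request, including block-layer queueing
        "avg_read_ms": ms_r / reads if reads else 0.0,
        "avg_write_ms": ms_w / writes if writes else 0.0,
    }


def sample_device(device: str, interval_s: float = 1.0,
                  path: str = "/proc/diskstats") -> Dict[str, float]:
    """Linux only: snapshot, wait, snapshot again, and profile one device."""
    with open(path) as fh:
        before = parse_diskstats(fh.read())[device]
    time.sleep(interval_s)
    with open(path) as fh:
        after = parse_diskstats(fh.read())[device]
    return io_profile(before, after, interval_s)
```

A call such as sample_device("nvme0n1", interval_s=5.0), repeated during peak hours, gives a first approximation of peak IOPS and read/write mix.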

Provisioning from observed workload data rather than marketing specifications avoids both oversizing and underperformance.

Managing Latency During Maintenance Events

Even when the working dataset fits in memory, maintenance tasks trigger disk activity. Checkpoints flush dirty buffers. WAL files sync to disk. Backups scan entire tables. Index builds create temporary write bursts.

These operations can temporarily multiply IOPS demand. Engineering strategies include tuning checkpoint frequency, staggering maintenance windows, allocating dedicated NVMe arrays for WAL, and monitoring disk queue depth.
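Tuning checkpoint frequency changes how concentrated the flush burst is. In PostgreSQL, checkpoint_timeout and checkpoint_completion_target control how long a checkpoint spreads its writes; 0.9 is the default completion target in recent versions. Other engines have analogous settings. The sketch below estimates the resulting write load under a deliberately simple model: each dirty page is written once, evenly across the spread window. WAL traffic, full-page writes, and OS write coalescing are ignored. The dirty-buffer volume is a hypothetical input that you would measure from the engine's own statistics.

```python
def checkpoint_write_load(dirty_gib: float, checkpoint_timeout_s: float,
                          completion_target: float = 0.9,
                          page_bytes: int = 8192) -> tuple:
    """Estimate (MiB/s, write IOPS) needed to flush dirty pages during a checkpoint.

    Simplified model (assumption): each dirty page is written exactly once,
    spread evenly over completion_target * checkpoint_timeout_s seconds.
    """
    if not 0 < completion_target <= 1:
        raise ValueError("completion_target must be in (0, 1]")
    spread_s = checkpoint_timeout_s * completion_target
    bytes_per_s = dirty_gib * 1024**3 / spread_s
    return bytes_per_s / 1024**2, bytes_per_s / page_bytes


if __name__ == "__main__":
    # Hypothetical: 24 GiB of dirty buffers flushed by 5-minute checkpoints
    for target in (0.5, 0.9):
        mib_s, iops = checkpoint_write_load(24, 300, target)
        print(f"completion_target={target}: {mib_s:.0f} MiB/s, {iops:,.0f} write IOPS")
```

Spreading the same dirty volume over a longer window lowers the peak write rate the NVMe array must absorb. The trade-off is that more WAL must be replayed if the server crashes mid-interval.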

Consistently elevated I/O wait percentages indicate that storage is approaching saturation. Database stability is tested during these stress events rather than during idle periods.
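On Linux, the I/O wait percentage reported by tools such as top and vmstat comes from the aggregate cpu line in /proc/stat. The sketch below computes it from two snapshots of that file. It assumes the standard field order (user, nice, system, idle, iowait, irq, softirq, steal). It leaves out the guest columns because the kernel already counts guest time inside user and nice. The kernel documentation itself describes iowait as an imprecise signal, so it is best read alongside queue depth and latency rather than on its own.

```python
import time
from typing import List


def cpu_times(stat_text: str) -> List[int]:
    """Return the aggregate 'cpu' counters (in clock ticks) from /proc/stat text."""
    for line in stat_text.splitlines():
        if line.startswith("cpu "):
            # user nice system idle iowait irq softirq steal (guest columns dropped)
            return [int(v) for v in line.split()[1:9]]
    raise ValueError("no aggregate cpu line found")


def iowait_percent(before: List[int], after: List[int]) -> float:
    """Share of CPU time spent idle with I/O outstanding between two snapshots."""
    deltas = [a - b for a, b in zip(after, before)]
    total = sum(deltas)
    return 100.0 * deltas[4] / total if total else 0.0


def sample_iowait(interval_s: float = 1.0, path: str = "/proc/stat") -> float:
    """Linux only: take two snapshots interval_s apart and return iowait %."""
    with open(path) as fh:
        before = cpu_times(fh.read())
    time.sleep(interval_s)
    with open(path) as fh:
        after = cpu_times(fh.read())
    return iowait_percent(before, after)
```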

Scaling Strategy and Lifecycle Modeling

Vertical scaling increases IOPS, CPU, and memory within a single node. Horizontal scaling distributes load across replicas or shards. Read replicas offload analytical queries. Sharding reduces write contention but increases architectural complexity.

Capacity planning must incorporate realistic annual write volume. For example, if host writes equal 250TB per year and measured write amplification is 1.4, NAND writes total approximately 350TB annually. With a 1750TBW endurance rating, the expected usable lifespan is about five years (1750 / 350 = 5). Without amplification modeling, replacement timelines may be miscalculated.
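The same arithmetic can be scripted so it is easy to rerun as measured write volume changes. The sketch follows the model described here and compares amplified NAND writes against the drive's rated TBW. Vendors often quote TBW or drive writes per day (DWPD) under a reference workload that already implies some internal amplification, so treat the result as a conservative estimate rather than a prediction. The DWPD conversion uses the standard relation TBW = DWPD × capacity × 365 × warranty years. The second drive in the usage example is hypothetical.

```python
def projected_lifespan_years(host_tb_per_year: float, waf: float,
                             rated_tbw: float) -> float:
    """Years until host writes multiplied by WAF reach the rated endurance."""
    nand_tb_per_year = host_tb_per_year * waf
    return rated_tbw / nand_tb_per_year


def tbw_from_dwpd(dwpd: float, capacity_tb: float, warranty_years: float) -> float:
    """Convert a drive-writes-per-day rating into total terabytes written."""
    return dwpd * capacity_tb * 365 * warranty_years


if __name__ == "__main__":
    # The worked example from the text: 250TB/year host writes, WAF 1.4, 1750TBW
    print(f"{projected_lifespan_years(250, 1.4, 1750):.1f} years")
    # Hypothetical drive: 0.96TB rated for 1 DWPD over a 5-year warranty
    rated = tbw_from_dwpd(1, 0.96, 5)
    print(f"{rated:.0f} TBW -> {projected_lifespan_years(250, 1.4, rated):.1f} years")
```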

Database server optimization requires planning not only for performance but also for predictable hardware lifecycle.

Dataplugs NVMe Infrastructure and Database Reliability

Performance instability often originates from shared-infrastructure contention rather than raw hardware limitations. Multi-tenant environments can introduce unpredictable bursts of I/O that destabilize latency-sensitive database applications.

Dataplugs designs dedicated NVMe servers hosted in enterprise-grade data centers across Asia Pacific, emphasizing predictable IOPS delivery for transactional systems. Dedicated resource allocation eliminates noisy-neighbor interference, ensuring storage operations are not shared with unrelated workloads. Configurable NVMe RAID 1 and RAID 10 deployments provide redundancy. RAID 10 additionally stripes write pressure across mirrored pairs, supporting sustained concurrency while preserving NAND endurance.

Redundant power infrastructure and structured cooling systems maintain thermal stability under continuous write activity, preventing throttling during peak database events. Premium low-latency network connectivity supports replication, clustering, and distributed database traffic without introducing cross-network bottlenecks.

For organizations operating SaaS platforms, financial transaction engines, analytics pipelines, or time-series databases, this dedicated NVMe environment provides a stable foundation aligned with high-IOPS server configuration principles. Infrastructure predictability strengthens both performance consistency and lifecycle management.

Continuous Monitoring and Operational Discipline

Storage performance is dynamic. Monitor disk queue depth, latency percentiles, replication lag, I/O wait percentage, and temporary file generation. Reset and compare statistics when evaluating performance changes. Optimization is continuous, not a one-time task.
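Percentiles matter because averages hide the tail spikes that users experience as stalls. The sketch below implements the nearest-rank percentile over latency samples collected by whatever monitoring tool is in use. Nearest-rank is one of several common definitions, and some monitoring systems interpolate instead. The sample trace in the usage example is hypothetical.

```python
import math
from typing import Dict, Sequence


def percentile(samples: Sequence[float], pct: float) -> float:
    """Nearest-rank percentile: smallest value with at least pct% of samples <= it."""
    if not samples:
        raise ValueError("no samples")
    if not 0 < pct <= 100:
        raise ValueError("pct must be in (0, 100]")
    ordered = sorted(samples)
    rank = math.ceil(pct / 100 * len(ordered))
    return ordered[rank - 1]


def latency_report(samples_ms: Sequence[float]) -> Dict[str, float]:
    """Summarize a latency trace at the percentiles typically tracked for storage."""
    return {f"p{p:g}": percentile(samples_ms, p) for p in (50, 95, 99, 99.9)}


if __name__ == "__main__":
    # Hypothetical trace: 98% fast reads, 2% stalled during background activity
    samples = [0.2] * 980 + [25.0] * 20
    print(f"mean={sum(samples) / len(samples):.3f} ms", latency_report(samples))
```

In this trace the mean stays well under 1 ms while p99 sits at 25 ms, exactly the kind of spike an average-only dashboard would miss.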

Organizations that treat IOPS capacity as a managed operational metric maintain stability during growth. Those that rely solely on vendor specification sheets often encounter limitations during seasonal demand spikes.

Conclusion

Designing high-IOPS servers for database-heavy workloads requires coordinated engineering across NVMe architecture, RAID topology, endurance modeling, memory allocation, and workload profiling. Enterprise storage for databases must deliver consistently low latency under sustained concurrency rather than short-term benchmark peaks.

A properly engineered NVMe SSD server for database environments supports stable checkpoint behavior, predictable replication performance, balanced flash wear, and scalable growth capacity.

For organizations seeking dedicated NVMe infrastructure engineered for database stability across Asia Pacific, connect with the Dataplugs team via live chat or at sales@dataplugs.com.