How Can You Optimize Storage Latency for Transaction-Intensive Applications?

When transaction commits begin to hesitate under peak load, when database locks accumulate despite low CPU usage, or when API response times fluctuate during write bursts, the constraint is rarely visible in application logs. It lives in the storage layer.

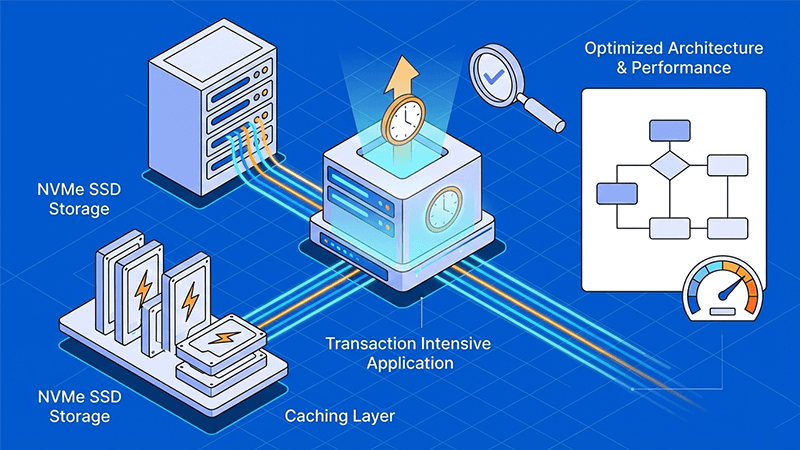

For transaction-intensive applications such as payment gateways, trading platforms, SaaS databases, ERP systems, and real-time analytics engines, storage latency directly shapes user experience. Microseconds matter. A few milliseconds of delay per I/O compounds into queue buildup, replication lag, and inconsistent throughput.

To optimize storage latency, infrastructure must be engineered around I/O behavior, not just capacity numbers.

Latency, IOPS, and the Reality of Transaction Workloads

Latency is the time required to complete a single read or write request. In OLTP systems, where thousands of small random operations occur per second, latency drives transaction speed more than raw bandwidth.

Understanding the difference between IOPS and throughput is essential. IOPS measures operations per second. Throughput measures data volume per second, which is IOPS multiplied by the I/O size. Transaction-intensive applications depend on high IOPS with consistently low latency, not just large MB/s numbers.
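The relationship between the two metrics can be sketched in a few lines of Python. The function names and the workload figures (100,000 IOPS at 4 KiB, a 1 MiB sequential scan) are illustrative assumptions, not measurements from any particular system.

```python
def throughput_mb_per_s(iops: float, block_size_bytes: int) -> float:
    """Data volume per second implied by an IOPS figure at a given I/O size."""
    return iops * block_size_bytes / 1_000_000


def iops_for_throughput(mb_per_s: float, block_size_bytes: int) -> float:
    """Operations per second needed to move mb_per_s at a given I/O size."""
    return mb_per_s * 1_000_000 / block_size_bytes


if __name__ == "__main__":
    # Hypothetical OLTP-style workload: many small random operations.
    oltp_mb = throughput_mb_per_s(iops=100_000, block_size_bytes=4096)
    print(f"100,000 IOPS at 4 KiB = {oltp_mb:.1f} MB/s")

    # The same data volume moved as 1 MiB sequential I/O needs far fewer operations.
    scan_iops = iops_for_throughput(mb_per_s=oltp_mb, block_size_bytes=1_048_576)
    print(f"{oltp_mb:.1f} MB/s at 1 MiB = {scan_iops:.0f} IOPS")
```

A device can therefore post an impressive MB/s figure on large sequential transfers while still falling short on the small random operations that OLTP workloads issue.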

This is where SSD vs HDD latency becomes decisive. Mechanical disks introduce rotational and seek delays measured in milliseconds. NVMe SSDs eliminate physical movement and attach directly over PCIe lanes, reducing typical response times from milliseconds to tens of microseconds.

Under concurrency, that architectural difference determines whether databases scale cleanly or stall under lock contention.

Why Shared Storage Environments Create Variability

Many cloud or virtualized environments rely on shared storage pools. Even when marketed as storage-optimized, multi-tenant architectures introduce variability from competing workloads.

Latency spikes often appear during burst traffic, backup windows, or neighboring tenant activity. For transaction systems that require deterministic performance, unpredictability is unacceptable.

True storage latency optimization requires isolation at the hardware layer.

NVMe Storage Performance and Parallel I/O

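NVMe storage performance is fundamentally built for parallelism. Unlike SATA SSDs, which sit behind AHCI's single queue of 32 commands, NVMe supports up to roughly 64K I/O queues, each up to 64K commands deep, allowing multi-core CPUs to issue concurrent I/O without contending for one shared queue.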

In production database environments:

- Small 4K random reads maintain stable response times

- Queue depth scales without disproportionate latency growth

- Write-heavy workloads distribute efficiently across RAID arrays

For high-performance database storage under MySQL, PostgreSQL, MongoDB, or Redis, NVMe in RAID 1 or RAID 10 mirrors data without the parity write overhead of RAID 5 or 6, and RAID 10 additionally stripes writes across mirrored pairs, which helps dampen checkpoint latency spikes.

Hardware capability alone, however, is not enough. Configuration, monitoring, and architectural alignment complete the picture.

Balancing Queue Depth, I/O Size, and Caching

Optimizing storage latency involves tuning:

- I/O block size

- Thread concurrency

- Queue depth

- Caching layers

Smaller I/O sizes increase IOPS potential but demand efficient scheduling. Queue depth beyond what the device can service in parallel adds no throughput; the extra requests simply wait, so latency rises even on fast NVMe systems.
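The effect of queue depth on latency follows from Little's Law (average latency = requests in flight / completion rate). The sketch below applies it to a deliberately simple saturation model; the 500,000 IOPS ceiling and 100 µs unloaded latency are assumed round numbers for illustration, not the specification of any real drive.

```python
def mean_latency_us(queue_depth: int, iops: float) -> float:
    """Little's Law: average time in system = items in flight / completion rate."""
    return queue_depth / iops * 1_000_000


def latency_curve(max_iops: float, base_latency_us: float, depths):
    """Simplified model: IOPS grows with queue depth until the device saturates.

    Below saturation each request completes in base_latency_us; above it the
    device delivers max_iops and additional requests only add waiting time.
    """
    rows = []
    for qd in depths:
        unsaturated_iops = qd / (base_latency_us / 1_000_000)
        iops = min(unsaturated_iops, max_iops)
        rows.append((qd, iops, mean_latency_us(qd, iops)))
    return rows


if __name__ == "__main__":
    # Assumed device: 500,000 IOPS ceiling, 100 microseconds unloaded latency.
    for qd, iops, lat in latency_curve(500_000, 100.0, (1, 32, 128, 256)):
        print(f"QD {qd:>3}: {iops:>9.0f} IOPS, mean latency {lat:.0f} us")
```

In this model, raising queue depth from 32 to 128 lifts throughput only until the ceiling is reached, after which mean latency climbs in proportion to depth (about 256 µs at depth 128 and 512 µs at depth 256). Real devices degrade less cleanly, so the curve should be measured rather than assumed.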

Caching further reduces storage pressure. In-memory caching layers, such as Redis or database query caching, can deliver responses 10 to 100 times faster than disk access. Proper TTL and eviction policies limit how stale cached data can become while protecting primary storage from unnecessary reads.
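How TTL and eviction interact can be shown with a small in-process read-through cache. This is a minimal sketch, not how Redis or any database query cache is implemented; the `TTLCache` class, its parameters, and the `load` callback standing in for a storage read are all hypothetical names chosen for the example.

```python
import time
from collections import OrderedDict


class TTLCache:
    """Read-through cache with a per-entry TTL and least-recently-used eviction."""

    def __init__(self, max_entries: int, ttl_seconds: float, clock=time.monotonic):
        self._max = max_entries
        self._ttl = ttl_seconds
        self._clock = clock
        self._data = OrderedDict()  # key -> (expires_at, value), oldest first

    def get(self, key, load):
        """Return the cached value, calling load(key) on a miss or expiry."""
        now = self._clock()
        entry = self._data.get(key)
        if entry is not None and entry[0] > now:
            self._data.move_to_end(key)  # mark as recently used
            return entry[1]
        value = load(key)  # miss or expired: read from primary storage
        self._data[key] = (now + self._ttl, value)
        self._data.move_to_end(key)
        while len(self._data) > self._max:
            self._data.popitem(last=False)  # evict the least recently used entry
        return value


if __name__ == "__main__":
    def read_from_database(key):  # hypothetical stand-in for a storage read
        print(f"storage read for {key!r}")
        return {"id": key}

    cache = TTLCache(max_entries=1000, ttl_seconds=30)
    cache.get("order:42", read_from_database)  # reaches storage
    cache.get("order:42", read_from_database)  # served from memory
```

The TTL bounds how long a stale value can be served, while the entry limit bounds memory use; both are workload-specific choices rather than universal defaults.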

Caching reduces load. NVMe ensures remaining load executes predictably.

Architecture Matters More Than Disk Speed

Data modeling and partitioning also influence storage latency. Horizontal sharding distributes write load. Read replicas offload reporting traffic. Index optimization minimizes full table scans.
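As a rough illustration of how horizontal sharding spreads write load, the sketch below routes each key to a shard by hashing it. The function name, key format, and shard count are assumptions made for the example; production systems often use consistent hashing or range-based schemes instead.

```python
import hashlib
from collections import Counter


def shard_for(key: str, shard_count: int) -> int:
    """Map a key to a shard index using a stable hash of the key."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big") % shard_count


if __name__ == "__main__":
    # Hypothetical account IDs spread across four database shards.
    counts = Counter(shard_for(f"account-{i}", 4) for i in range(10_000))
    for shard in sorted(counts):
        print(f"shard {shard}: {counts[shard]} keys")
```

A stable hash keeps each key on the same shard across processes and restarts. The trade-off of plain modulo routing is that changing the shard count remaps most keys, which is why resharding usually needs a migration plan.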

Consistency models influence coordination overhead. Strong consistency adds synchronization latency. Eventual consistency improves responsiveness but must align with business logic.

Storage latency optimization is therefore multi-layered: hardware, file system, database engine, and caching strategy must work together.

Dataplugs All-Flash NVMe Dedicated Servers

For transaction-intensive applications where predictability is critical, infrastructure isolation is as important as disk speed.

Dataplugs deploys all-flash NVMe dedicated servers engineered specifically for sustained high-IOPS workloads. Unlike shared cloud volumes, these are single-tenant systems with no oversubscription. Disk performance is not shared, and I/O isolation eliminates noisy-neighbor interference.

Key characteristics include:

- Enterprise-grade NVMe SSD arrays designed for consistent low latency

- RAID 1 and RAID 10 configurations for mirrored redundancy, with RAID 10 striping writes across mirrored pairs

- Direct hardware access without virtualization storage contention

- Data-center-grade power redundancy and cooling stability to prevent thermal throttling

- Asia Pacific deployment locations for reduced regional network latency

Because NVMe storage performance is delivered on dedicated hardware, queue depth behavior remains stable under parallel workloads. Database checkpoints, replication bursts, and write-intensive events maintain a predictable latency distribution.

For organizations running financial systems, SaaS platforms, blockchain nodes, or ecommerce infrastructure, this architectural consistency is often more valuable than theoretical burst metrics advertised in shared environments.

Dataplugs infrastructure is designed for long-term stability, not short-term benchmark spikes.

SSD vs HDD Latency in Modern Deployments

HDDs still serve archival and sequential workloads. But for transaction databases, mechanical limitations impose hard ceilings on random I/O performance.
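The ceiling can be estimated from the mechanics alone: each random I/O pays an average seek plus, on average, half a platter revolution. The sketch below works through that arithmetic; the seek times used are assumed typical values, not figures for a specific drive model.

```python
def hdd_random_iops(rpm: int, avg_seek_ms: float) -> float:
    """Approximate random IOPS for one spindle at queue depth 1.

    Service time = average seek + average rotational delay (half a revolution).
    Transfer time for a small block is ignored as negligible by comparison.
    """
    rotational_ms = 0.5 * 60_000 / rpm
    service_ms = avg_seek_ms + rotational_ms
    return 1000 / service_ms


if __name__ == "__main__":
    # Assumed seek times: 8.5 ms for a 7,200 RPM drive, 3.5 ms for a 15,000 RPM drive.
    print(f"7,200 RPM:  {hdd_random_iops(7200, 8.5):.0f} IOPS")
    print(f"15,000 RPM: {hdd_random_iops(15000, 3.5):.0f} IOPS")
```

Under these assumptions a 7,200 RPM drive manages roughly 79 random IOPS and a 15,000 RPM drive roughly 180. Command queuing and striping across spindles raise the aggregate figure, but the per-request latency stays in the millisecond range.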

In 2025 production environments, HDDs should not be used for primary OLTP systems. All-flash NVMe infrastructure is the standard for low-latency storage supporting transaction-intensive applications.

Practical Steps to Optimize Storage Latency

- Profile I/O patterns to identify the dominant block size, read/write mix, and concurrency.

- Deploy NVMe-based storage for random read/write workloads.

- Use RAID 10 for write-heavy environments.

- Cache hot reads in memory with explicit TTL and eviction policies.

- Monitor latency percentiles, not just averages.

- Avoid oversubscribed shared storage when determinism is required.

Each layer contributes incremental improvement. Together, they deliver measurable performance stability.
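The advice to monitor percentiles rather than averages is easiest to see with numbers. The sketch below uses a nearest-rank percentile over a synthetic sample in which 2% of requests hit a slow path; the sample values are invented for illustration and the helper names are hypothetical.

```python
import math


def percentile(samples, pct: float) -> float:
    """Nearest-rank percentile: the smallest value covering pct% of samples."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    rank = max(1, math.ceil(pct / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(latencies_us):
    """Mean alongside the percentiles that expose tail latency."""
    return {
        "mean": sum(latencies_us) / len(latencies_us),
        "p50": percentile(latencies_us, 50),
        "p99": percentile(latencies_us, 99),
    }


if __name__ == "__main__":
    # Synthetic sample: 980 requests at 100 us, 20 stalled requests at 5,000 us.
    sample = [100.0] * 980 + [5000.0] * 20
    for name, value in summarize(sample).items():
        print(f"{name}: {value:.0f} us")
```

Here the mean is 198 µs and the median is 100 µs, both of which look healthy, while the 99th percentile is 5,000 µs. A dashboard that tracks only the average would miss the stalls that users actually experience.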

Conclusion

To optimize storage latency for transaction-intensive applications, infrastructure must align with workload behavior. High IOPS alone is not sufficient. Consistent low latency under concurrency is the real objective.

All-flash NVMe architecture, intelligent caching, workload-aware partitioning, and hardware isolation form the foundation of storage latency optimization. When deployed on dedicated infrastructure engineered for deterministic performance, transaction systems scale predictably.

For organizations across Asia Pacific seeking reliable, high-performance storage for databases without shared-environment variability, connect with the Dataplugs team via live chat or at sales@dataplugs.com.