How can you scale infrastructure for open source AI agents across users?

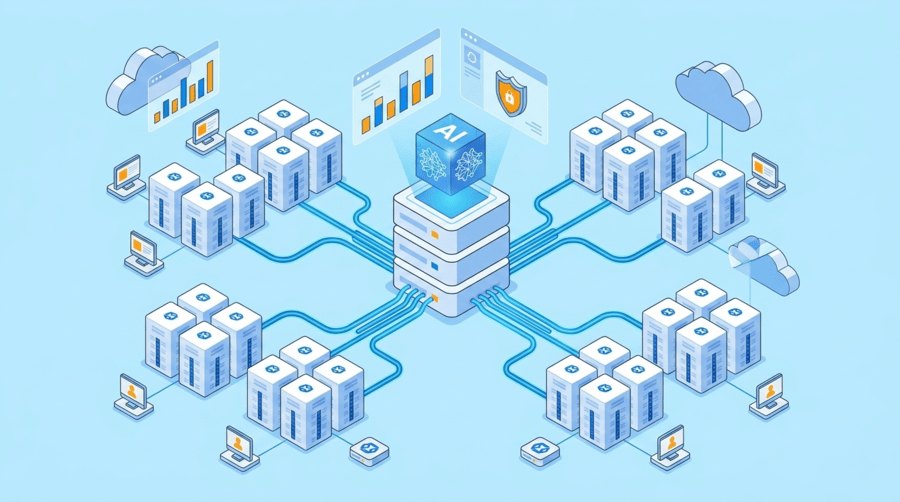

Once AI agents move beyond testing, the real challenge is not only whether the model responds well. It is whether the infrastructure can keep user sessions separate, control tool access, handle growing workloads, and stay stable as more people rely on it. What works for one user in a development environment often starts to break when the same agent needs to support many users, departments, or business workflows at the same time.

When teams start thinking seriously about open source AI agents, the issue is no longer just how to run them. It becomes how to run them safely, consistently, and efficiently across users without creating performance bottlenecks or operational risk. That is where infrastructure design starts to matter much more than most early-stage projects expect.

Why production environments change the problem

A single-user agent is relatively simple. A production agent is not. Once the workload includes multiple users, different access levels, memory states, API calls, and long-running tasks, the system begins behaving more like a distributed application than a lightweight assistant.

A growing environment usually needs to manage:

- concurrent workloads across users

- isolated memory and session handling

- tool and API permissions

- retries and workflow continuation

- logs, traces, and observability

- consistent network performance across regions

Isolation should be planned early

When AI agents are shared across users, isolation is one of the first things that needs to be designed properly. One user’s context should not leak into another user’s workflow. One workload should not consume so many resources that it disrupts everyone else. One tool connection should not expose unnecessary access across the environment.

A dedicated server can help here because it gives more direct control over compute allocation, network rules, storage configuration, and environment separation. That does not solve every issue automatically: separation between users who share the same server still has to be enforced in the application and runtime layers. But it creates a better foundation for applying those policies consistently.
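At the application level, the two isolation goals above (no context leaking between users, and no single user starving the others) can be sketched in a few lines. This is a minimal illustration that assumes an asyncio-based Python service. `SessionManager`, `UserSession`, and the `agent` callable are hypothetical names, not part of any particular agent framework. A real deployment would also keep sessions in an external store rather than in process memory.

```python
import asyncio
from dataclasses import dataclass, field
from typing import Awaitable, Callable

# Hypothetical agent signature: takes the user's history, returns a reply.
AgentFn = Callable[[list[str]], Awaitable[str]]


@dataclass
class UserSession:
    """Conversation context that belongs to exactly one user."""
    user_id: str
    history: list[str] = field(default_factory=list)


class SessionManager:
    """Keeps per-user context separate and caps concurrent work per user."""

    def __init__(self, max_concurrent_per_user: int = 2) -> None:
        self._sessions: dict[str, UserSession] = {}
        self._limits: dict[str, asyncio.Semaphore] = {}
        self._max = max_concurrent_per_user

    def session_for(self, user_id: str) -> UserSession:
        # Sessions are keyed by user ID, so a request can only ever
        # read or extend the history of the user it belongs to.
        if user_id not in self._sessions:
            self._sessions[user_id] = UserSession(user_id)
        return self._sessions[user_id]

    def _limit_for(self, user_id: str) -> asyncio.Semaphore:
        if user_id not in self._limits:
            self._limits[user_id] = asyncio.Semaphore(self._max)
        return self._limits[user_id]

    async def run(self, user_id: str, prompt: str, agent: AgentFn) -> str:
        # A per-user semaphore stops one busy user from occupying every
        # worker slot while other users wait.
        async with self._limit_for(user_id):
            session = self.session_for(user_id)
            session.history.append(prompt)
            reply = await agent(list(session.history))  # pass a copy, not the live list
            session.history.append(reply)
            return reply
```

Usage: `await manager.run("alice", "Summarize my tickets", agent)`. A per-user semaphore is only a simple fairness control. A shared deployment might also combine it with a global worker limit and per-tenant quotas.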

Orchestration becomes necessary once agents do more than answer prompts

Many production agents do not finish their work in one interaction. They may need to wait for files, retrieve information from other systems, call APIs, trigger approvals, or resume later when another event occurs. This means the environment needs to support more than just inference.

A more reliable deployment often includes:

- asynchronous job handling

- durable task execution

- persistent state tracking

- queue-based workflows

- recovery after interruption
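The durable-execution ideas in the list above can be illustrated with a small SQLite-backed queue. Job state is written to disk before and after each attempt. Failed jobs are retried up to a limit, and jobs left in the running state by a crashed worker are returned to the queue on restart. This is a single-worker sketch under those assumptions, and `DurableQueue` and its methods are hypothetical. Running several workers safely would need atomic job claiming or a dedicated queue or workflow engine.

```python
import sqlite3
from typing import Callable, Optional


class DurableQueue:
    """Hypothetical single-worker job queue whose state survives restarts."""

    def __init__(self, path: str = "jobs.db") -> None:
        self.db = sqlite3.connect(path)
        self.db.execute(
            "CREATE TABLE IF NOT EXISTS jobs ("
            " id INTEGER PRIMARY KEY, payload TEXT, status TEXT,"
            " attempts INTEGER DEFAULT 0, last_error TEXT)"
        )
        self.db.commit()

    def enqueue(self, payload: str) -> int:
        cur = self.db.execute(
            "INSERT INTO jobs (payload, status) VALUES (?, 'pending')", (payload,)
        )
        self.db.commit()
        return cur.lastrowid

    def recover(self) -> None:
        # Jobs left 'running' by a crashed worker go back to 'pending'.
        self.db.execute("UPDATE jobs SET status='pending' WHERE status='running'")
        self.db.commit()

    def work_once(self, handler: Callable[[str], None], max_attempts: int = 3) -> bool:
        """Process the oldest pending job; return False if none is waiting."""
        row = self.db.execute(
            "SELECT id, payload, attempts FROM jobs"
            " WHERE status='pending' ORDER BY id LIMIT 1"
        ).fetchone()
        if row is None:
            return False
        job_id, payload, attempts = row
        # Persist the attempt before running it, so a crash is visible later.
        self.db.execute(
            "UPDATE jobs SET status='running', attempts=? WHERE id=?",
            (attempts + 1, job_id),
        )
        self.db.commit()
        try:
            handler(payload)
        except Exception as exc:
            # Retry until max_attempts, then park the job as 'failed' for review.
            status = "pending" if attempts + 1 < max_attempts else "failed"
            self.db.execute(
                "UPDATE jobs SET status=?, last_error=? WHERE id=?",
                (status, str(exc), job_id),
            )
        else:
            self.db.execute("UPDATE jobs SET status='done' WHERE id=?", (job_id,))
        self.db.commit()
        return True

    def status(self, job_id: int) -> Optional[tuple[str, int]]:
        return self.db.execute(
            "SELECT status, attempts FROM jobs WHERE id=?", (job_id,)
        ).fetchone()
```

A production version would usually also add a backoff delay between retries. Without one, a failing upstream API is simply called again on the next pass.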

Notes: If your agent workflows involve uploads, approvals, or delayed actions, leave enough storage and memory headroom for queues, logs, and workflow state from the start.

Shared context needs structure, not unlimited access

Giving agents access to more information does not always improve outcomes. In many production environments, too much unfiltered context creates slower responses, higher costs, and more inconsistent behavior. It can also introduce security and governance issues if users or agents can reach data they do not actually need.

A better model is to separate context into distinct layers such as short-term session context, workflow state, shared knowledge, and long-term logs. This makes it easier to decide what belongs in real-time prompts and what should stay in supporting systems.
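One way to express the layered model is to keep each layer in its own field and decide explicitly which layers go into the prompt, and how much of each. The sketch below assumes that shared knowledge arrives already ranked by relevance and that logs are never sent to the model. `AgentContext` and its default limits are illustrative, not tuned values.

```python
from dataclasses import dataclass, field


@dataclass
class AgentContext:
    """Hypothetical container that keeps each context layer separate."""
    session: list[str] = field(default_factory=list)            # short-term turns
    workflow_state: dict[str, str] = field(default_factory=dict)
    shared_knowledge: list[str] = field(default_factory=list)   # assumed pre-ranked
    logs: list[str] = field(default_factory=list)               # never sent to the model

    def build_prompt(self, max_session_turns: int = 4, max_docs: int = 2) -> str:
        parts = []
        if self.workflow_state:
            state = ", ".join(f"{k}={v}" for k, v in sorted(self.workflow_state.items()))
            parts.append(f"[workflow] {state}")
        # Only the top-ranked documents are included, not the whole knowledge base.
        for doc in self.shared_knowledge[:max_docs]:
            parts.append(f"[knowledge] {doc}")
        # Only the most recent turns are included; older ones stay in storage.
        for turn in self.session[-max_session_turns:]:
            parts.append(f"[turn] {turn}")
        return "\n".join(parts)
```

Keeping the limits as explicit parameters makes the trade-off between context size, cost, and latency visible and easy to adjust per workflow.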

Tips: Fast storage fills up faster than expected once embeddings, logs, cached results, and workflow records start accumulating.

Network quality affects user experience and agent reliability

AI agents often depend on APIs, databases, internal systems, vector stores, and monitoring tools. Once those dependencies spread across regions, routing quality starts to affect reliability just as much as compute power does.

For businesses serving users in Hong Kong, Mainland China, or wider Asia, this can be especially relevant. Dataplugs is worth considering here because it provides dedicated servers in Hong Kong, Tokyo, and Los Angeles, backed by BGP network design and CN2 Direct China connectivity options that can help support more stable cross-region operations.

Tips: Match server location to both user traffic and connected systems, not just to where the team is based.
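Matching location to both users and connected systems can be treated as a weighted cost. One agent request may call a database or API several times, so latency to those systems can outweigh latency to the user. The function below is a hypothetical helper. The region names and latency figures in the usage note are placeholders that would come from your own measurements, not benchmarks of any provider.

```python
def pick_region(
    latency_ms: dict[str, dict[str, float]],
    calls_per_request: dict[str, float],
) -> str:
    """Return the candidate region with the lowest weighted latency per request.

    latency_ms[region][target] is the measured round-trip time from a server in
    `region` to `target` (for example "users", "db", or "vector_store").
    calls_per_request[target] is how many round trips one agent request makes.
    """
    def cost(region: str) -> float:
        return sum(
            calls * latency_ms[region][target]
            for target, calls in calls_per_request.items()
        )

    return min(latency_ms, key=cost)
```

For example, `pick_region({"region-a": {"users": 20, "db": 150}, "region-b": {"users": 60, "db": 5}}, {"users": 1, "db": 3})` returns `"region-b"`, because 60 + 3 × 5 = 75 ms is lower than 20 + 3 × 150 = 470 ms, even though region-a is closer to the users.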

Security and monitoring need to grow with the workload

As agent environments expand, permissions and visibility become more important. Agents should use scoped credentials, role-based permissions, and access controls tied closely to what they actually need to do.
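A deny-by-default permission check in front of every tool call is one straightforward way to tie agent access to its role. In this sketch, the role-to-tool mapping, `AgentIdentity`, and `call_tool` are hypothetical. In practice, the policy would come from configuration or an identity provider, and each tool would use its own narrowly scoped credential.

```python
from dataclasses import dataclass
from typing import Any, Callable

# Hypothetical policy: which tools each agent role may call.
ROLE_TOOLS: dict[str, frozenset[str]] = {
    "support_agent": frozenset({"search_kb", "read_ticket"}),
    "finance_agent": frozenset({"read_invoice", "create_refund_request"}),
}


class ToolNotPermitted(PermissionError):
    """Raised when an agent asks for a tool outside its role."""


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str
    role: str
    user_id: str  # the end user this agent is acting for


def call_tool(
    identity: AgentIdentity,
    tool_name: str,
    tools: dict[str, Callable[..., Any]],
    **kwargs: Any,
) -> Any:
    # Deny by default: unknown roles get an empty permission set.
    allowed = ROLE_TOOLS.get(identity.role, frozenset())
    if tool_name not in allowed:
        raise ToolNotPermitted(
            f"{identity.agent_id} ({identity.role}) may not call {tool_name}"
        )
    return tools[tool_name](**kwargs)
```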

Monitoring matters just as much. Teams need to know what tools were called, what data was accessed, where latency increased, and when behavior starts to drift. Infrastructure protections such as firewall controls, DDoS mitigation, and WAF policies also help protect the service around the agent.

Dataplugs can support this kind of deployment with services such as Anti-DDoS Protection, Firewall Protection, WAF, and backup-related options that help teams create a more controlled production setup.

Notes: Before launch, confirm where your logs will be stored and how long they will be retained, because monitoring overhead grows quickly in active agent environments.
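On a single host, Python's standard `logging.handlers.TimedRotatingFileHandler` can enforce a simple local retention window. With `when="midnight"`, it starts a new file each day, and `backupCount` caps how many old files are kept. This is a sketch under those assumptions. `configure_audit_log` is a hypothetical helper, and logs shipped to a central store still need that system's own retention policy.

```python
import logging
import os
from logging.handlers import TimedRotatingFileHandler


def configure_audit_log(path: str, retention_days: int = 30) -> logging.Logger:
    """Hypothetical helper: daily log files, keeping about `retention_days` of history."""
    os.makedirs(os.path.dirname(path) or ".", exist_ok=True)
    handler = TimedRotatingFileHandler(
        path,
        when="midnight",             # start a new file at each local midnight
        backupCount=retention_days,  # older rotated files are deleted at rollover
        encoding="utf-8",
    )
    handler.setFormatter(logging.Formatter("%(asctime)s %(levelname)s %(message)s"))
    logger = logging.getLogger("agent.audit.file")
    logger.setLevel(logging.INFO)
    logger.addHandler(handler)
    return logger
```

Rotation and deletion only happen when the process logs after the rollover time, so a long-idle service may keep an older file slightly longer than the nominal window.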

FAQ

What is the biggest challenge when scaling AI agents across users?

The biggest challenge is usually not the model itself. It is keeping sessions, permissions, memory, and workloads properly separated while maintaining stable performance under concurrent usage.

Why does a multi-user AI agent deployment need orchestration?

Many production agents need to handle delayed tasks, retries, API calls, and event-based actions. Without orchestration, those workflows become difficult to track and to recover when something fails.

How important is server location for AI agent performance?

It matters more than many teams expect. Better routing between users, APIs, databases, and internal systems can improve response times and reduce workflow failures.

When should a business consider dedicated servers for AI agents?

Usually when agents begin serving multiple users, handling sensitive workflows, or depending on predictable compute, storage, and network performance that shared environments may not provide consistently.

Conclusion

Scaling infrastructure for open source AI agents across users is really about building a controlled environment before the workload becomes too large to manage comfortably. Isolation, orchestration, context structure, network stability, and access control all become more important once agents move into shared production use.

For businesses exploring infrastructure that can support this kind of growth, Dataplugs offers dedicated server options, regional deployment choices, fast storage configurations, and practical security services that fit well with production AI agent operations. To learn more, contact the Dataplugs team via live chat or email at sales@dataplugs.com.