1Gbps vs 10Gbps Dedicated Servers: Which One Fits Your Real‑World Use Cases?

Infrastructure teams rarely debate bandwidth in theory. The conversation usually begins when systems start behaving differently under real traffic. A platform that performs smoothly in testing may slow down once thousands of users connect or when large files begin transferring across the network. At that point, choosing between a 1Gbps dedicated server and a 10Gbps dedicated server becomes a practical infrastructure decision rather than a simple specification upgrade.

For engineers planning scalable platforms, the difference between these two bandwidth tiers directly affects how applications handle concurrency, how quickly data moves across systems, and how stable services remain during traffic spikes. This is why organizations often conduct a careful dedicated server bandwidth comparison before deploying production environments.

Understanding Dedicated Server Bandwidth and Throughput

Bandwidth represents the maximum rate at which a server can transmit data through its network interface. A 1Gbps dedicated server can transfer up to one gigabit per second, which translates to about 125MB per second under ideal conditions. A 10Gbps dedicated server raises this ceiling to approximately 1.25GB per second, allowing significantly larger volumes of data to move across the network.
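The conversion behind those figures is simple arithmetic. The sketch below assumes decimal units (1 Gbps = 1,000,000,000 bits per second) and ignores protocol overhead, so real transfers will land somewhat lower. The function name is illustrative.

```python
def gbps_to_megabytes_per_second(gbps: float) -> float:
    """Convert a port speed in gigabits per second to decimal megabytes per second."""
    bits_per_second = gbps * 1_000_000_000
    bytes_per_second = bits_per_second / 8  # 8 bits per byte
    return bytes_per_second / 1_000_000


for port in (1, 10):
    rate = gbps_to_megabytes_per_second(port)
    print(f"{port}Gbps port: about {rate:.0f} MB/s before protocol overhead")
```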

However, real-world performance depends on more than port speed. CPU processing power, storage throughput, network interface cards, switch infrastructure, and routing paths all influence how efficiently data moves through a server. Because of these variables, any evaluation of dedicated server bandwidth requirements should consider how applications behave under real workloads rather than relying purely on theoretical limits.

Dedicated Server Bandwidth Comparison in Real Environments

When comparing a 1Gbps vs 10Gbps dedicated server, the differences typically appear in throughput capacity, connection concurrency, and latency stability during heavy load. A higher bandwidth connection allows significantly larger amounts of data to move at once, which becomes especially noticeable when transferring large files or handling continuous streams of information.

Higher bandwidth also raises the ceiling on concurrency. A 10Gbps connection lets a server carry far more simultaneous sessions before the port saturates. Because the network interface is far less likely to reach its limit, latency tends to remain stable even during traffic spikes. These improvements are most visible in environments where applications regularly process large volumes of data.

Where 1Gbps Dedicated Servers Work Well

Despite increasing demand for faster infrastructure, the standard 1Gbps dedicated server still supports a wide range of production workloads effectively. Many applications do not generate constant high bandwidth traffic and instead rely on smaller transactions such as database queries, API requests, or page loads.

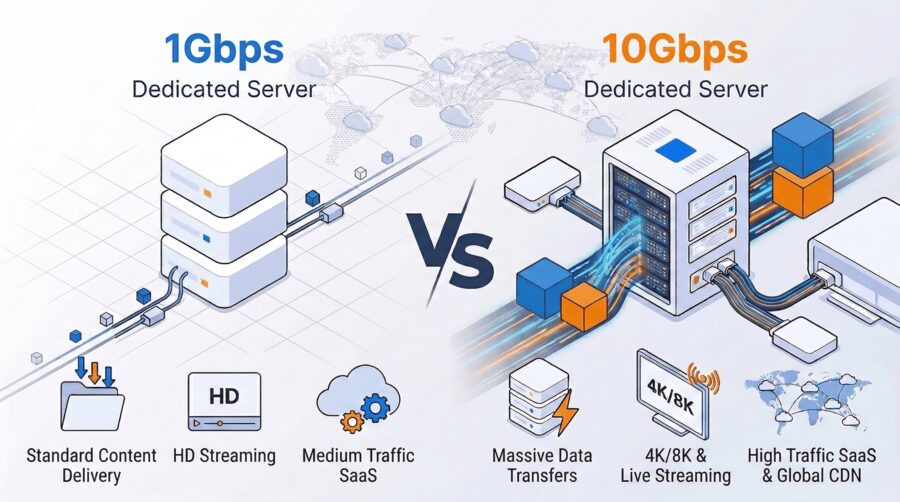

For these types of systems, processing power and application optimization usually have a greater impact on performance than network speed alone. Common workloads that perform well on 1Gbps infrastructure include corporate websites, ecommerce platforms with moderate traffic, internal APIs, development environments, and SaaS platforms in their early growth stages. In these scenarios, the network rarely becomes a performance bottleneck.

When a 10Gbps Dedicated Server Becomes Necessary

Some applications generate sustained high data transfer volumes where network capacity directly affects user experience. In these environments, a 1Gbps connection can quickly reach its limits and introduce delays.

Several common 10Gbps server use cases illustrate where higher bandwidth becomes essential. Video streaming platforms require continuous delivery of high-bitrate media, especially when large numbers of viewers watch simultaneously. Software distribution platforms also demand high bandwidth because game updates, application installers, and media libraries can reach tens or even hundreds of gigabytes.
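To make the streaming case concrete, here is a rough estimate of how many simultaneous viewers a single port can serve. The 5 Mbps bitrate and the 80 percent usable share are illustrative assumptions, not measurements, and the function name is hypothetical.

```python
def max_concurrent_streams(port_mbps: int, stream_mbps: int, usable_percent: int = 80) -> int:
    """Estimate how many streams fit on a port.

    usable_percent leaves headroom for protocol overhead and bursts;
    80 is an assumed rule of thumb, not a fixed standard.
    """
    usable_mbps = port_mbps * usable_percent // 100
    return usable_mbps // stream_mbps


for label, port_mbps in (("1Gbps", 1_000), ("10Gbps", 10_000)):
    viewers = max_concurrent_streams(port_mbps, stream_mbps=5)
    print(f"{label}: roughly {viewers} viewers at 5 Mbps each")
```

Under these assumptions the viewer ceiling scales linearly with port speed, which is why streaming platforms tend to outgrow a 1Gbps port early.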

Cloud storage and backup systems rely heavily on network throughput as well. Replicating large datasets between locations or performing off-site backups becomes significantly faster when higher bandwidth connections are available. Other workloads that benefit from high bandwidth include online gaming servers with large player bases, content delivery networks distributing media assets, big data processing clusters transferring datasets internally, and high-traffic SaaS platforms supporting thousands of concurrent users.
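The effect on backup and replication windows can be estimated directly from dataset size and port speed. This sketch assumes the port is the only limit and that the link is fully available to the transfer, which is a best case. The 1 TB dataset is an illustrative figure.

```python
def transfer_seconds(size_gb: float, port_gbps: float) -> float:
    """Best-case time to move size_gb decimal gigabytes over a port_gbps link."""
    size_gigabits = size_gb * 8
    return size_gigabits / port_gbps


dataset_gb = 1000  # illustrative 1 TB backup set
for port in (1, 10):
    hours = transfer_seconds(dataset_gb, port) / 3600
    print(f"{port}Gbps: about {hours:.1f} hours for {dataset_gb} GB")
```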

How Network Bottlenecks Affect Applications

Network congestion rarely appears as an obvious system error. Instead, it introduces gradual performance issues that affect application responsiveness. When bandwidth approaches its limits, data packets must wait in queues before being transmitted. This delay leads to slower page loads, buffering video streams, and inconsistent application responses.
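One way to see why delays grow gradually and then sharply is a textbook M/M/1 queue. In that model the average time a packet spends waiting and being sent grows as 1 / (1 - utilization). This is a simplifying assumption used for illustration. Real traffic is burstier and real devices have finite buffers, so treat the output as a shape, not a prediction.

```python
def delay_multiplier(utilization: float) -> float:
    """Mean time in system relative to an idle link, assuming an M/M/1 queue."""
    if not 0 <= utilization < 1:
        raise ValueError("utilization must be at least 0 and below 1")
    return 1 / (1 - utilization)


for load in (0.5, 0.8, 0.9, 0.99):
    factor = delay_multiplier(load)
    print(f"{load:.0%} utilized: about {factor:.0f}x the idle delay")
```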

These issues are most noticeable during peak traffic periods. A server that performs normally under average load may struggle when thousands of users access the platform simultaneously. Understanding when to use 10Gbps server infrastructure helps organizations prevent these problems before they impact production services.

Unmetered Bandwidth and Cost Predictability

Bandwidth planning also involves understanding how hosting providers measure network usage. Some services use metered bandwidth models, charging customers based on the total volume of data transferred each month.

Unmetered bandwidth plans operate differently. Instead of charging for data usage, organizations pay a fixed monthly rate based on port speed, such as 1Gbps or 10Gbps. As long as traffic stays within the physical limits of the network port, data can be transferred continuously without additional charges. This approach is widely used by streaming platforms, content delivery services, and large-scale SaaS applications because it provides predictable infrastructure costs.
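A quick calculation shows the most data an unmetered port could move in a month, and the monthly volume at which a flat fee would match a metered plan. The prices are hypothetical placeholders, not any provider's actual rates.

```python
def max_monthly_transfer_tb(port_gbps: float, days: int = 30) -> float:
    """Upper bound on monthly transfer (decimal TB) if the port ran at line rate all month."""
    seconds = days * 86_400
    gigabytes = port_gbps * seconds / 8
    return gigabytes / 1000


def breakeven_tb(flat_monthly_fee: float, metered_price_per_tb: float) -> float:
    """Monthly volume above which the flat fee is cheaper than metered billing."""
    return flat_monthly_fee / metered_price_per_tb


for port in (1, 10):
    print(f"{port}Gbps port: at most {max_monthly_transfer_tb(port):.0f} TB per 30 days")

# Hypothetical prices, for illustration only.
print(f"Break-even at {breakeven_tb(500, 5):.0f} TB per month")
```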

Hardware Requirements for High Bandwidth Servers

Network speed alone does not guarantee performance. To fully utilize high-bandwidth infrastructure, the rest of the server hardware must also support the required throughput. Modern high-bandwidth dedicated server deployments typically combine multi-core processors capable of handling large numbers of concurrent connections, large memory capacity for caching and application processes, and NVMe SSD storage capable of extremely fast read and write speeds.

Enterprise network interface cards designed for 10GbE connectivity also play a critical role. Without balanced hardware architecture, internal bottlenecks such as slow storage or limited CPU capacity can prevent a server from utilizing its full network potential.
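The idea of a balanced architecture can be expressed as a simple rule: end-to-end throughput is capped by the slowest component in the path. The figures below are rough, illustrative values in MB/s and should be replaced with numbers measured on your own hardware.

```python
def find_bottleneck(component_mb_per_s: dict) -> tuple:
    """Return (name, rate) of the slowest component, which caps overall throughput."""
    name = min(component_mb_per_s, key=component_mb_per_s.get)
    return name, component_mb_per_s[name]


# Illustrative numbers only; measure your own hardware.
server = {
    "10GbE port": 1250,
    "SATA SSD reads": 550,
    "CPU (TLS and application work)": 2000,
}

name, rate = find_bottleneck(server)
print(f"Bottleneck: {name} at about {rate} MB/s")
```

In this hypothetical setup the storage, not the 10GbE port, sets the limit, which is why faster ports are usually paired with NVMe storage.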

Why Dedicated Infrastructure Improves Network Stability

Shared hosting environments often introduce performance variability because multiple tenants compete for the same hardware and network resources. When neighboring workloads generate heavy traffic, available bandwidth can fluctuate.

Dedicated servers eliminate this contention by isolating CPU resources, storage throughput, and network capacity for a single deployment. Infrastructure providers such as Dataplugs deploy NVMe SSD dedicated servers within secure Tier III data centers across the Asia Pacific region. With isolated hardware resources and high throughput network architecture, organizations can maintain stable performance even during significant traffic spikes. This type of environment supports SaaS platforms, ecommerce systems, fintech services, and data intensive workloads that require consistent infrastructure reliability.

Identifying the Right Bandwidth for Your Workload

Organizations evaluating 1Gbps vs 10Gbps dedicated server options typically begin by analyzing real traffic patterns. Several indicators suggest that higher bandwidth may be necessary, including network usage consistently approaching capacity, large file transfers slowing application performance, traffic spikes affecting response times, or rapid growth in user concurrency across multiple regions.
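The first indicator, usage consistently approaching capacity, can be checked against monitoring data. This sketch takes throughput samples in Mbps, computes a nearest-rank 95th percentile, and flags the port when that value reaches a chosen share of capacity. The 80 percent threshold and the sample values are assumptions for illustration.

```python
def percentile_95(samples: list) -> float:
    """Nearest-rank 95th percentile of a non-empty list of samples."""
    ordered = sorted(samples)
    rank = (95 * len(ordered) + 99) // 100  # integer ceiling of 0.95 * n
    return ordered[rank - 1]


def needs_upgrade(samples_mbps: list, port_mbps: int, threshold_percent: int = 80) -> bool:
    """True when the 95th percentile reaches threshold_percent of the port speed."""
    return percentile_95(samples_mbps) * 100 >= port_mbps * threshold_percent


# Hypothetical samples from a 1Gbps port.
busy_port = [300] * 18 + [850, 900]
quiet_port = [300] * 19 + [900]

print("busy port needs upgrade:", needs_upgrade(busy_port, port_mbps=1000))
print("quiet port needs upgrade:", needs_upgrade(quiet_port, port_mbps=1000))
```

Using a percentile instead of the peak keeps a single short burst from triggering an upgrade decision.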

When these patterns begin to appear, upgrading to 10Gbps infrastructure can significantly improve both performance and scalability.

Conclusion

Choosing between a 1Gbps and 10Gbps dedicated server ultimately depends on how your applications generate and move data. Many platforms run efficiently on 1Gbps infrastructure, while data-intensive services such as streaming platforms, high-traffic SaaS systems, and large-scale download services benefit from the higher throughput of 10Gbps connectivity.

For organizations planning scalable deployments, dedicated NVMe server environments help maintain stable performance as workloads grow. To explore infrastructure options aligned with your requirements, connect with the team via live chat or at sales@dataplugs.com.